This Boston Globe article poses interesting questions about the legal and moral responsibilities of robots (yes, robots).

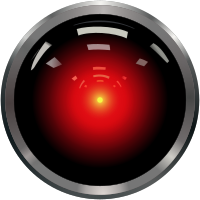

In summary, does the legal system need to adapt to adjudicating cases in which machines run by artificial intelligence make decisions based on their own reasoning, as opposed to faulty programming? (Think HAL in 2001.) Some argue that robots with full autonomy “can and should be held criminally liable” for their actions. In other words, what is at issue is not malice or intent, but the action itself.

The implications will resonate as electronics manufacturing shifts to robot-operated production.

HAL is watching us. Do we need to watch HAL?